Powering the future of AI

Enterprise-grade datasets for robotics and wearables

High-fidelity 3D datasets for AR/VR, robotics, and autonomous systems. Built for research and production.


Built to accelerate model development

Industry-leading datasets designed for cutting-edge research and production systems

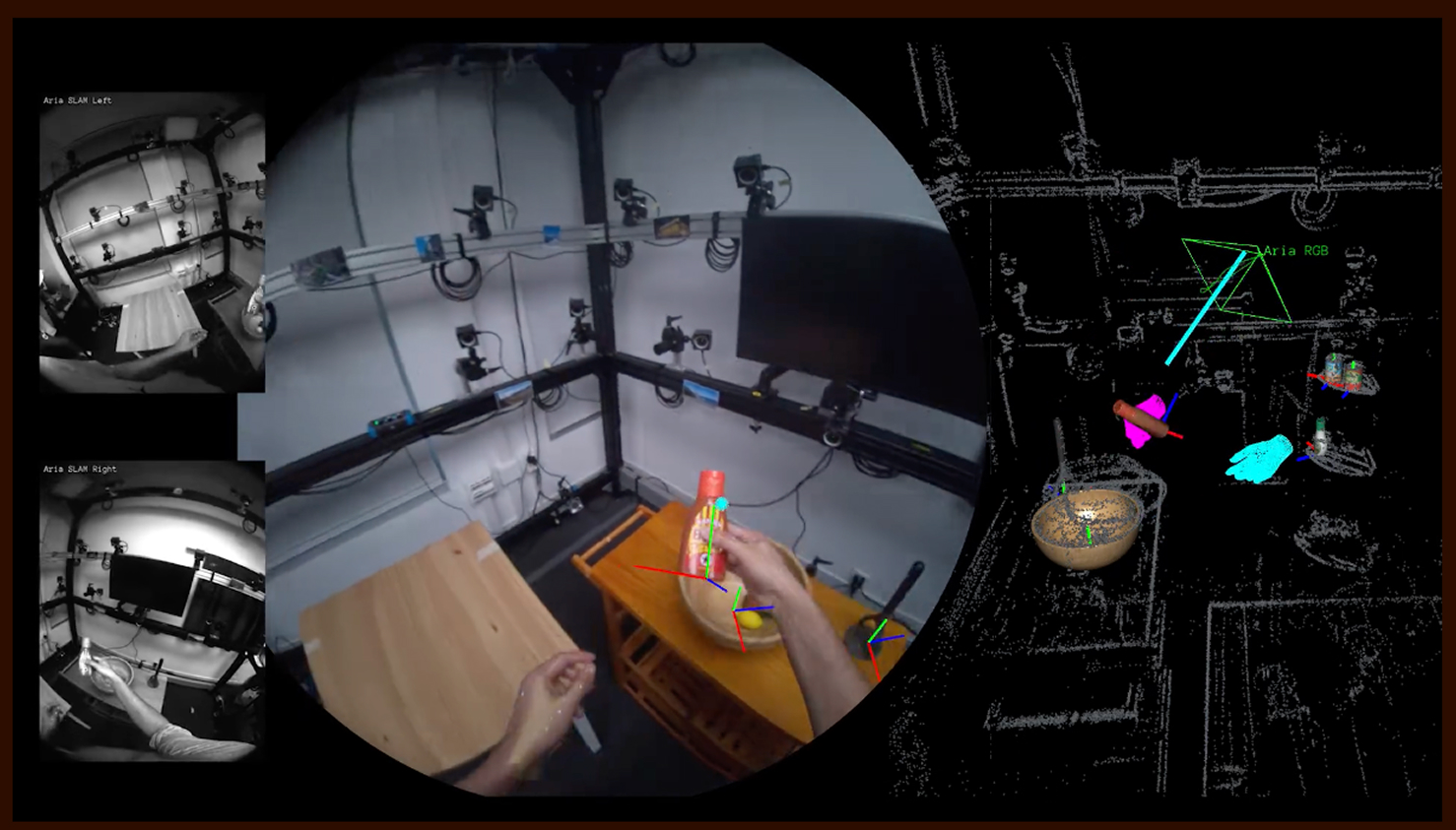

Egocentric vision

High-resolution first-person datasets captured with AR glasses and wearable cameras for immersive experiences.

3D scene understanding

Spatial mapping and object detection with millimeter accuracy for robotics and autonomous systems.

Sensor fusion

Synchronized IMU, depth, and visual data for multi-modal learning in complex environments.

Real-time processing

Formats optimized for fast training and low-latency inference on edge devices.


Explore datasets

Curated collections of high-quality data for robotics and wearable applications

HOT3D

HAND-OBJECT TRACKING IN 3D

3D hand and object pose tracking from egocentric RGB and depth sequences.

SpatialMap

SCENE RECONSTRUCTION

Dense 3D reconstructions of indoor and outdoor environments with semantic annotations.

WearableSuite

MULTI-MODAL SENSORS

Comprehensive sensor data from AR headsets including eye tracking and audio.

EgoMotion

EGOCENTRIC MOVEMENT

First-person navigation data for SLAM and spatial AI applications.

GraspNet

ROBOTIC GRASPING

Robotic manipulation dataset with diverse objects and grasp poses.

AudioScene

SPATIAL AUDIO

3D spatial audio recordings for immersive audio and AR applications.